Duy Nguyen, from the Interactive Machine Learning department, and colleagues from the University of Oldenburg, Max Planck Research School for Intelligent Systems, the University of Texas at Austin, the University of California San Diego, and other institutions presented a full paper and a workshop paper at NeurIPS 2023. NeurIPS is considered one of the premier global conferences in the field of machine learning. The conference took place in New Orleans, USA, from December 10 to 16, 2023, and had an overall acceptance rate of 26.1%.

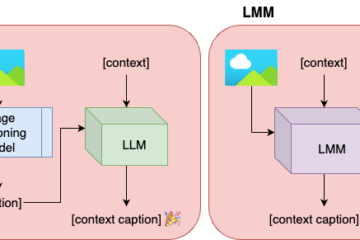

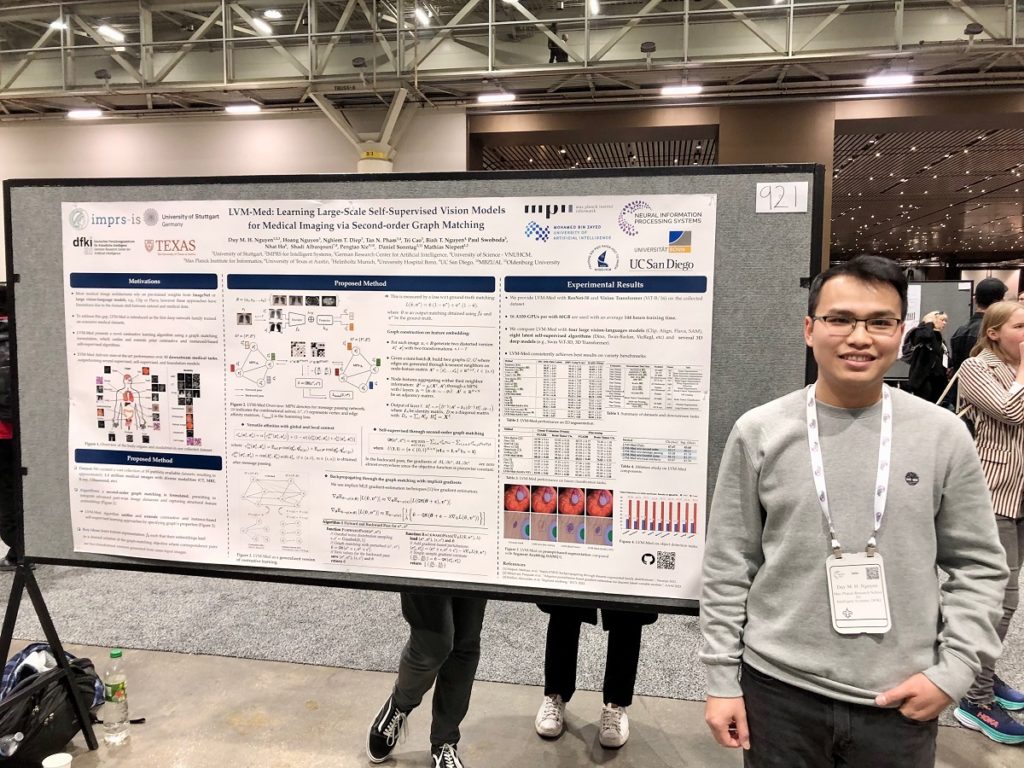

The first paper, “LVM-Med: Learning Large-Scale Self-Supervised Vision Models for Medical Imaging via Second-order Graph Matching”, introduces LVM-Med, a novel family of large vision models: deep networks trained on a large-scale dataset of approximately 1.3 million medical images from 55 publicly available datasets, covering various organs and modalities such as CT, MRI, X-ray, and ultrasound. To address the domain shift between natural and medical images, the authors propose a self-supervised contrastive learning algorithm for fine-tuning pre-trained models. The algorithm integrates pair-wise image similarity metrics, captures structural constraints through a graph-matching loss function, and allows efficient end-to-end training using modern gradient estimation techniques. LVM-Med is evaluated on 15 medical tasks, where it outperforms state-of-the-art supervised, self-supervised, and foundation models. Notably, on challenging tasks such as brain tumor classification and diabetic retinopathy grading, LVM-Med achieves a 6-7% improvement over previous vision-language models while using only a ResNet-50. The pre-trained models are available to the community.
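To give a feel for what "second-order" graph matching means, here is a minimal sketch in plain Python. It is not the authors' loss or solver: the helper names (`cosine`, `match_score`, `best_matching`), the toy two-dimensional embeddings, and the brute-force search over permutations are all assumptions made for illustration. The score combines a first-order term (how similar each matched pair of embeddings is) with a second-order term (how well pairwise relations inside one view are preserved in the other).

```python
from itertools import permutations
import math


def cosine(u, v):
    """Cosine similarity between two vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def match_score(perm, view_a, view_b):
    """Score a matching in which item i of view_a is paired with item perm[i] of view_b."""
    n = len(view_a)
    # First-order term: similarity of each matched pair of embeddings.
    node_term = sum(cosine(view_a[i], view_b[perm[i]]) for i in range(n))
    # Second-order term: penalize matchings that distort pairwise relations,
    # i.e. the similarity of (i, j) in view_a should resemble the similarity
    # of their matched partners in view_b.
    edge_term = 0.0
    for i in range(n):
        for j in range(i + 1, n):
            sim_a = cosine(view_a[i], view_a[j])
            sim_b = cosine(view_b[perm[i]], view_b[perm[j]])
            edge_term -= abs(sim_a - sim_b)
    return node_term + edge_term


def best_matching(view_a, view_b):
    """Brute-force search over all permutations (only feasible for tiny inputs)."""
    n = len(view_a)
    return max(permutations(range(n)), key=lambda p: match_score(p, view_a, view_b))


# Toy embeddings (hypothetical): view_b is a shuffled, slightly perturbed copy of view_a.
view_a = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
view_b = [[0.1, 1.0], [0.9, 1.1], [1.0, 0.1]]

print(best_matching(view_a, view_b))
```

In a real training setup the matching would be produced by a combinatorial solver and the gradient estimated through it, as the paper summary above indicates; the exhaustive search here only serves to make the objective concrete.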

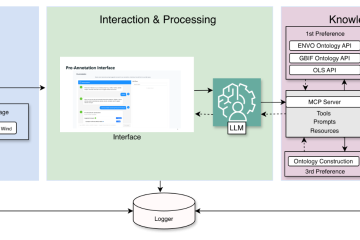

The second paper, “On the Out of Distribution Robustness of Foundation Models in Medical Image Segmentation”, accepted at the Workshop on robustness of zero/few-shot learning in foundation models (R0-FoMo), investigates how to build robust medical imaging models that generalize to test samples under distribution shift. In particular, the authors compare the generalization performance of various pre-trained models after fine-tuning on the same in-distribution dataset and find that foundation-based models exhibit better robustness than other architectures. The study also introduces a new Bayesian uncertainty estimation for frozen models and uses it as an indicator of the model’s performance on out-of-distribution (OOD) data, which is beneficial for real-world applications. The experiments highlight the limitations of current indicators commonly used in natural image applications, such as accuracy-on-the-line and agreement-on-the-line, and underscore the promise of the introduced Bayesian uncertainty: predictions with lower uncertainty tend to correspond to higher OOD performance.
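The idea of using uncertainty as a performance indicator can be illustrated with a generic, sampling-based measure. The sketch below computes the predictive entropy of the averaged class probabilities over several stochastic predictions for the same input. This is a common textbook quantity and is only an assumption for illustration; it is not the Bayesian estimator proposed in the paper, and the function name `predictive_entropy` and the toy probabilities are hypothetical.

```python
import math


def predictive_entropy(sampled_probs):
    """Entropy of the mean class distribution over several stochastic predictions.

    sampled_probs: list of probability vectors, one per stochastic forward pass.
    """
    n_samples = len(sampled_probs)
    n_classes = len(sampled_probs[0])
    # Average the class probabilities across the sampled predictions.
    mean_probs = [
        sum(sample[c] for sample in sampled_probs) / n_samples
        for c in range(n_classes)
    ]
    # Shannon entropy in nats; zero-probability classes contribute nothing.
    return -sum(p * math.log(p) for p in mean_probs if p > 0)


# Toy predictions (hypothetical): three sampled outputs for a two-class problem.
consistent = [[0.95, 0.05], [0.97, 0.03], [0.96, 0.04]]    # samples agree
inconsistent = [[0.90, 0.10], [0.20, 0.80], [0.50, 0.50]]  # samples disagree

print(predictive_entropy(consistent))
print(predictive_entropy(inconsistent))
```

When the sampled predictions agree, the entropy is low; when they disagree, it is high. An indicator of this kind can be computed without labels, which is what makes uncertainty attractive for anticipating performance on shifted data.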

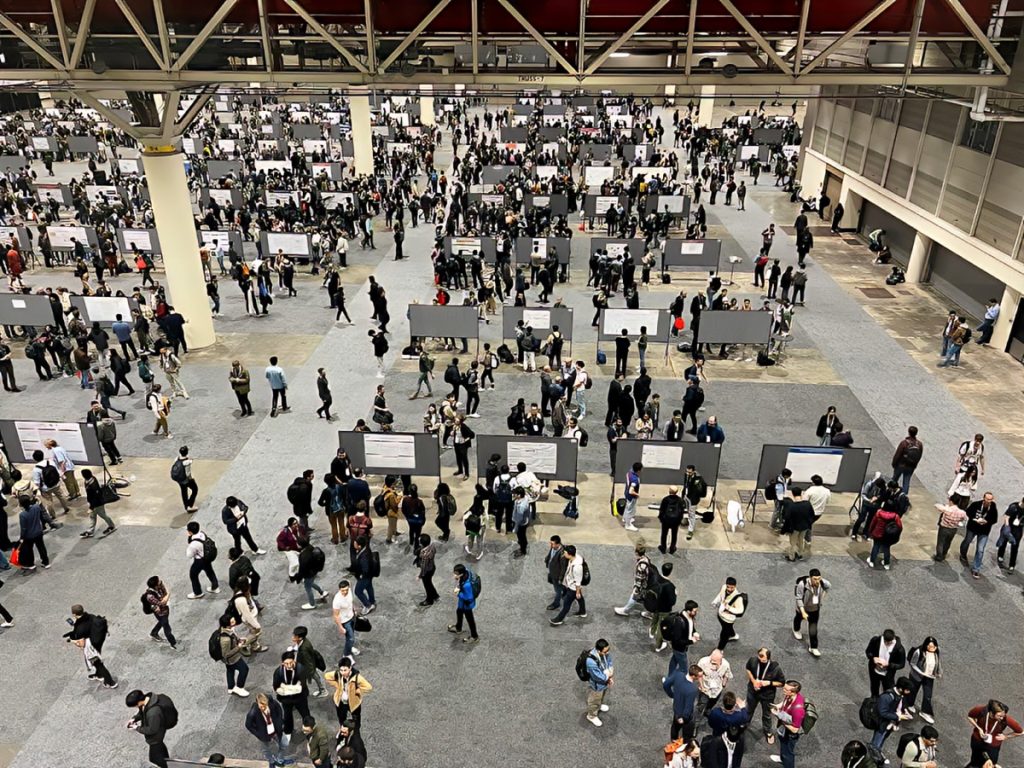

Like many previous NeurIPS conferences, NeurIPS 2023 featured a diverse program with several invited speakers, 2,773 accepted posters, 14 tutorials, and 58 workshops. Duy highlights several workshops of particular relevance to IML, for example Foundation Models for Decision Making; Optimal Transport and Machine Learning; XAI in Action: Past, Present, and Future Applications; and Medical Imaging meets NeurIPS. Furthermore, our department established contacts and collaborations for upcoming projects with leading groups in machine learning and biomedical research at Harvard University and Stanford University.

Duy Nguyen with the poster explaining his paper at NeurIPS 2023

References

Nguyen, Duy MH, et al. “LVM-Med: Learning Large-Scale Self-Supervised Vision Models for Medical Imaging via Second-order Graph Matching.” arXiv preprint arXiv:2306.11925 (2023).

Nguyen, Duy MH, et al. “On the Out of Distribution Robustness of Foundation Models in Medical Image Segmentation.” arXiv preprint arXiv:2311.11096 (2023).