László Kopácsi from the Interactive Machine Learning (IML) Department at DFKI presented three demos and a workshop paper at the 33rd IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR), which took place from March 21 to 25, 2026, in Daegu, Korea. IEEE VR is a leading international venue for research on virtual, augmented, and mixed reality, as well as 3D user interfaces.

At this year’s conference, IML presented research on gaze interaction, eye-tracking support, and immersive human-in-the-loop machine learning. The work focused on improving the usability and transparency of XR systems, both for developers building gaze-based applications and for users interacting with eye-tracking and machine learning technologies in virtual environments.

László Kopácsi presenting the VR demos.

One of the presented demos, GazeDrift, is a serious game designed to help users understand common eye-tracking problems in XR. Through a balloon-popping game, it makes issues such as jitter, systematic gaze shifts, reduced peripheral accuracy, and the “Midas touch” problem easier to recognize and troubleshoot in an intuitive, hands-on way. ➜ Video

A second contribution, GTK: An Open-Source Toolkit for Gaze-based Interaction in XR, introduced a reusable Unity toolkit for building gaze-aware XR applications. The toolkit includes modular components such as gaze-based interactables, spatial context menus, onboarding elements, visual attention monitoring, and tools for checking and improving eye-tracking accuracy, helping developers integrate gaze interaction more easily into training and industrial XR scenarios. ➜ Video

This work was complemented by the GTK workshop paper, which presents the broader idea behind the toolkit as an open and extensible framework for active gaze interaction and passive attention monitoring in XR. The paper highlights how the toolkit supports multimodal interaction, structured onboarding, and practical deployment across different research and application scenarios. ➜ Source code on GitHub

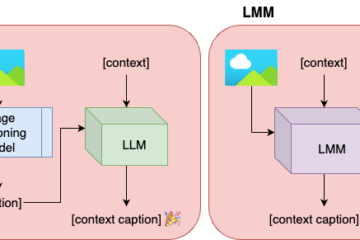

The third demo, User-Centric Active Learning through Immersive Visualization, explored how virtual reality can support human involvement in machine learning. The system allows users to inspect and annotate data points directly inside an immersive 3D embedding space, while visual cues such as uncertainty, density, diversity, and class coverage help them make more informed annotation decisions and iteratively improve the model. ➜ Video

László Kopácsi with Arnulph Fuhrmann and Kristoffer Waldow from TH Köln, who are involved in the REACH and VIRAMM open-call projects of MASTER-XR.

References:

🎥 GazeDrift demo

Kopácsi et al. (2026). GazeDrift: A Balloon-Popping Serious Game for Eye Tracking Troubleshooting in VR. IEEE VR’26 Demo ➜ Video

🎥 GTK demo

Kopácsi et al. (2026). GTK: An Open-Source Toolkit for Gaze-based Interaction in XR. IEEE VR’26 Demo ➜ Video

📄 GTK Unity Package

Kopácsi et al. (2026). GTK: A Gaze-Based Interaction Toolkit in XR. IEEE VR’26 GEMINI Workshop ➜ Source code on GitHub

🎥 Active Learning demo

Saghir et al. (2026). User-Centric Active Learning through Immersive Visualization. IEEE VR’26 Demo ➜ Video