Omar Adjali from the Interactive Machine Learning (IML) Department at DFKI presented our paper, “Aligning Instruction-Tuned LLMs for Event Extraction with Multi-objective Reinforcement Learning”, at the 48th European Conference on Information Retrieval (ECIR 2026), held from 29 March to 2 April 2026 in Delft, The Netherlands.

The European Conference on Information Retrieval (ECIR) is a leading international conference in the field of information retrieval, bringing together researchers and practitioners from academia and industry. The 2026 edition had an acceptance rate of 34%.

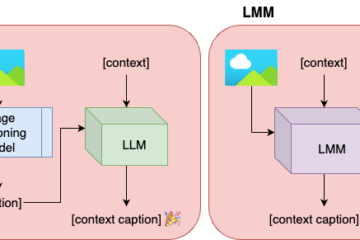

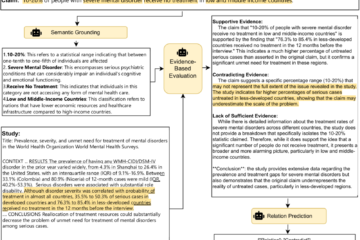

The paper presents a reinforcement learning approach that improves LLM-based event extraction with tailored rewards that enforce correct output format, semantic consistency, and task alignment. Despite the success of instruction-tuned Large Language Models (LLMs), current methods often produce inconsistently formatted outputs, semantic drift, and event types that deviate from the predefined schema. These issues arise partly because supervised fine-tuning relies on static loss functions that fail to reflect task-specific objectives such as schema alignment. To address these limitations, we introduce a reinforcement learning framework based on Group Relative Policy Optimization (GRPO), designed to optimize instruction-tuned LLMs for event and argument extraction.
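
To give a rough feel for how several reward signals can feed a GRPO-style update, here is a minimal Python sketch. It is an illustration under stated assumptions, not the reward design from the paper: the JSON output layout, the `ALLOWED_TYPES` schema, the three reward functions, and the weights are all hypothetical stand-ins. The only part taken from GRPO itself is the last step, where each sampled output's reward is normalized against the mean and standard deviation of its own group.

```python
import json
from statistics import mean, pstdev

# Hypothetical event schema, for illustration only.
ALLOWED_TYPES = {"Attack", "Transport", "Meet"}


def format_reward(output: str) -> float:
    """1.0 if the output is a JSON object with string 'event_type' and 'trigger' fields."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return 0.0
    if not isinstance(parsed, dict):
        return 0.0
    ok = all(isinstance(parsed.get(key), str) for key in ("event_type", "trigger"))
    return 1.0 if ok else 0.0


def schema_reward(output: str) -> float:
    """1.0 if the predicted event type belongs to the (assumed) schema."""
    if format_reward(output) == 0.0:
        return 0.0
    return 1.0 if json.loads(output)["event_type"] in ALLOWED_TYPES else 0.0


def semantic_reward(output: str, source: str) -> float:
    """Crude stand-in for semantic consistency: the trigger must occur in the source text."""
    if format_reward(output) == 0.0:
        return 0.0
    trigger = json.loads(output)["trigger"]
    return 1.0 if trigger and trigger.lower() in source.lower() else 0.0


def total_reward(output: str, source: str, weights=(0.2, 0.4, 0.4)) -> float:
    """Weighted sum of the three objectives; the weights are arbitrary assumptions."""
    w_format, w_schema, w_semantic = weights
    return (
        w_format * format_reward(output)
        + w_schema * schema_reward(output)
        + w_semantic * semantic_reward(output, source)
    )


def group_relative_advantages(rewards, eps=1e-8):
    """GRPO-style advantages: standardize each reward within its sampled group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Usage: score a group of sampled outputs for one input sentence.
source = "Rebels attacked the convoy near the border."
group = [
    '{"event_type": "Attack", "trigger": "attacked"}',   # valid, in schema, grounded
    '{"event_type": "Assault", "trigger": "attacked"}',  # valid JSON, type outside schema
    "The event is an attack.",                            # not JSON at all
]
rewards = [total_reward(output, source) for output in group]
advantages = group_relative_advantages(rewards)
```

In this toy group, the well-formed, schema-conformant output receives the highest advantage and the free-text answer the lowest, so a policy update would shift probability mass toward outputs that satisfy all three objectives at once. A real setup would likely replace the substring check with a stronger semantic measure and would also score event arguments.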