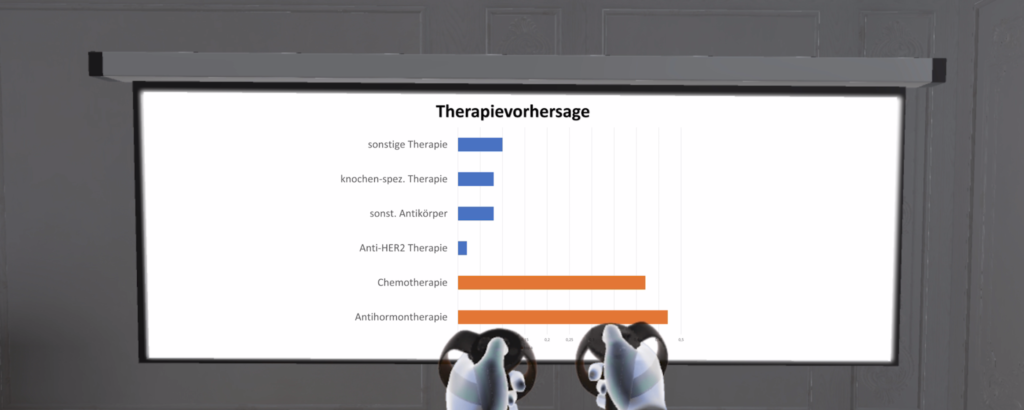

As part of the Clinical Data Intelligence project, we present a speech dialogue system that facilitates medical decision support for doctors in a virtual reality (VR) application. The therapy prediction is based on a recurrent neural network model that incorporates the examination history of patients. A central supervised patient database provides input to our predictive model and allows us, first, to add new examination reports on the fly through a pen-based mobile application and, second, to retrieve therapy prediction results in real time. We visualize patient records, radiology image data, and the therapy prediction results in VR.

Categories: HCI & Virtual Reality